📖 AgenticQA Documentation

Welcome to the AgenticQA documentation. This guide covers the architecture and implementation of AI-native test automation systems that combine LLMs, RAG, and agentic AI with Playwright.

This documentation evolves alongside the projects it describes. Subscribe to the @AgenticQA YouTube channel for tutorials and updates.

🏗️ Architecture Overview

AgenticQA follows a three-layer architecture that separates AI planning from deterministic execution for CI/CD reliability.

Three-Layer Architecture

NO LLM calls in Layer 3 (Execution) - EVER. Keeping the LLM out of execution makes CI/CD runs deterministic: the same intent always produces the same actions, so results are reproducible.
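A minimal sketch of this separation, assuming the three layers are Intent, Validation, and Execution, and assuming a JSON intent format with `action`, `selector`, `value`, and `url` fields. The allow-list, the `FakePage` class, and the function names are hypothetical and are not taken from AgenticQA; `FakePage` only mirrors the `goto`, `click`, and `fill` method names of a Playwright page so the example runs without a browser.

```python
import json

# Hypothetical allow-list: action name -> required fields (not AgenticQA's actual schema).
ALLOWED_ACTIONS = {
    "goto": {"url"},
    "click": {"selector"},
    "fill": {"selector", "value"},
}


class FakePage:
    """Stand-in for a browser page so the sketch runs without a browser."""

    def __init__(self):
        self.log = []

    def goto(self, url):
        self.log.append(("goto", url))

    def click(self, selector):
        self.log.append(("click", selector))

    def fill(self, selector, value):
        self.log.append(("fill", selector, value))


def validate(raw_json):
    """Layer 2: parse the LLM output and reject anything outside the allow-list."""
    steps = json.loads(raw_json)
    if not isinstance(steps, list):
        raise ValueError("intent must be a JSON list of steps")
    for step in steps:
        if not isinstance(step, dict):
            raise ValueError("each step must be a JSON object")
        action = step.get("action")
        if action not in ALLOWED_ACTIONS:
            raise ValueError(f"unknown action: {action!r}")
        fields = set(step) - {"action"}
        if fields != ALLOWED_ACTIONS[action]:
            raise ValueError(f"{action} expects fields {sorted(ALLOWED_ACTIONS[action])}")
    return steps


def execute(steps, page):
    """Layer 3: plain dispatch with no LLM calls, so identical steps run identically."""
    for step in steps:
        args = {k: v for k, v in step.items() if k != "action"}
        getattr(page, step["action"])(**args)


# Layer 1 output, written by hand here; in practice an LLM would generate it ahead of time.
intent = (
    '[{"action": "goto", "url": "https://example.com/login"},'
    ' {"action": "fill", "selector": "#user", "value": "demo"},'
    ' {"action": "click", "selector": "#submit"}]'
)

page = FakePage()
execute(validate(intent), page)
print(page.log)
```

Because validation runs before execution, a malformed or unexpected intent fails fast with a `ValueError` instead of reaching the browser.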

⚙️ Tech Stack

🧠 AI/ML Stack

🎭 Automation Stack

🐍 Backend Stack

🚀 DevOps Stack

RAG Pipeline

Retrieval-Augmented Generation (RAG) grounds LLM responses in relevant context retrieved from your documentation, which reduces hallucinations.
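The retrieve-then-prompt idea can be sketched in a few lines. This is a toy illustration under stated assumptions: word counts stand in for a real embedding model, a Python list stands in for a vector store, and the sample documents and function names (`embed`, `cosine`, `retrieve`, `build_prompt`) are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical documentation snippets; a real pipeline would load and chunk actual docs.
DOCS = [
    "The login page requires a username and a password.",
    "Checkout fails when the cart is empty.",
    "Password reset links expire after 24 hours.",
]


def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model instead."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, docs, k=2):
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query, docs):
    """Place retrieved context ahead of the question so the LLM answers from it."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


print(build_prompt("When do password reset links expire?", DOCS))
```

The prompt returned by `build_prompt` is what would be sent to the LLM; the model call itself is left out so the sketch stays self-contained.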

RAG Pipeline Flow

Embeddings

How Embeddings Work

Agentic AI

MCP Server

Available MCP Tools

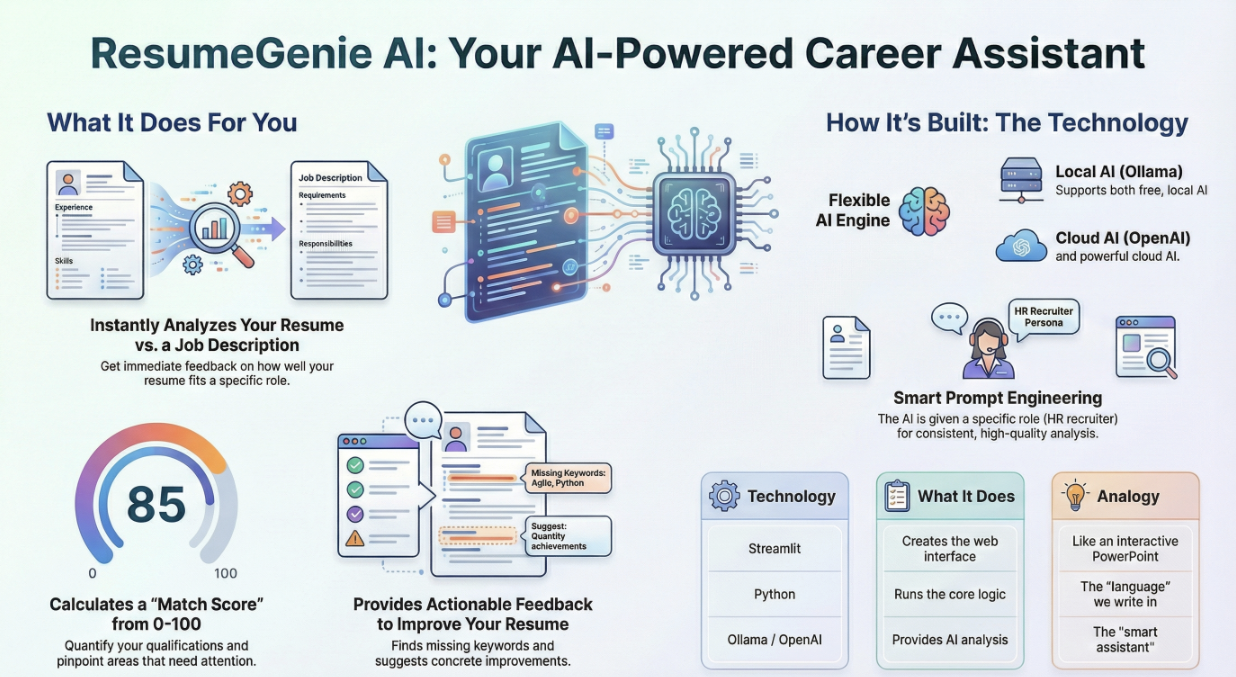

ResumeGenie AI

What It Does

- Analyzes resume vs job description

- Calculates match score (0-100)

- Provides actionable feedback

- Identifies missing keywords
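As a rough illustration of a 0-100 match score and a missing-keyword list, the sketch below uses plain keyword overlap. This is an assumption made for the example: ResumeGenie's own scoring is LLM-driven and is not documented here, and the stop-word list and function names are hypothetical.

```python
import re

# Small illustrative stop-word list; a real tool would use a fuller one.
STOP_WORDS = {"a", "an", "and", "the", "with", "in", "of", "for", "to", "on"}


def keywords(text):
    """Lowercase word set with stop words removed."""
    return set(re.findall(r"[a-z0-9+#]+", text.lower())) - STOP_WORDS


def match_report(resume, job_description):
    """Score = share of job-description keywords found in the resume, scaled to 0-100."""
    wanted = keywords(job_description)
    found = wanted & keywords(resume)
    score = round(100 * len(found) / len(wanted)) if wanted else 0
    return {"score": score, "missing": sorted(wanted - found)}


report = match_report(
    resume="Built test automation in Python with Playwright and pytest.",
    job_description="Python automation engineer with Playwright and Docker experience",
)
print(report)  # {'score': 50, 'missing': ['docker', 'engineer', 'experience']}
```

An LLM-based analysis would replace the overlap rule with a prompt, but the output shape (a score plus a list of gaps) can stay the same.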

Tech Stack

- Streamlit (Web UI)

- Python (Core Logic)

- Ollama / OpenAI (AI Analysis)

- Smart Prompt Engineering

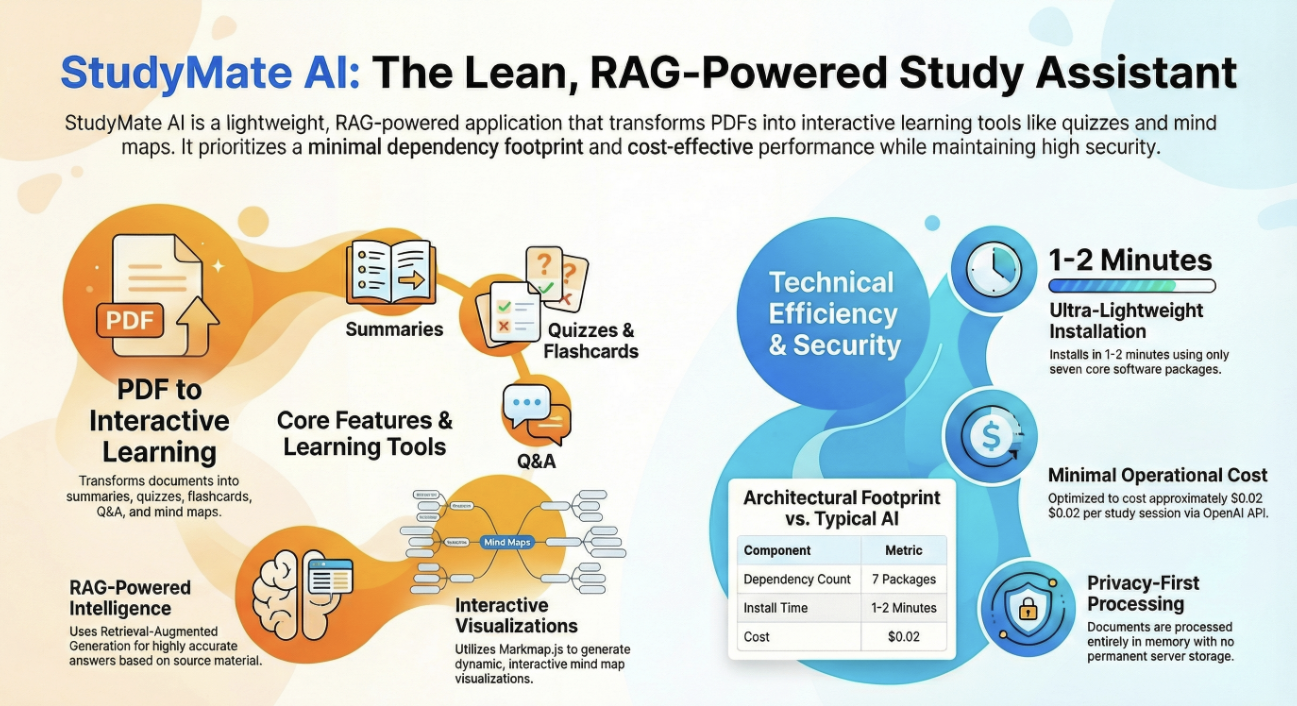

StudyMate AI

What It Does

- Transforms PDFs into interactive learning tools

- Generates summaries, quizzes, flashcards & Q&A

- RAG-powered intelligence for accurate answers

- Creates interactive mind map visualizations

Tech Stack

- 7 Core Packages (Ultra-lightweight)

- Markmap.js (Mind Map Visualizations)

- RAG (Retrieval-Augmented Generation)

- OpenAI API (~$0.02/session)

- Privacy-first in-memory processing

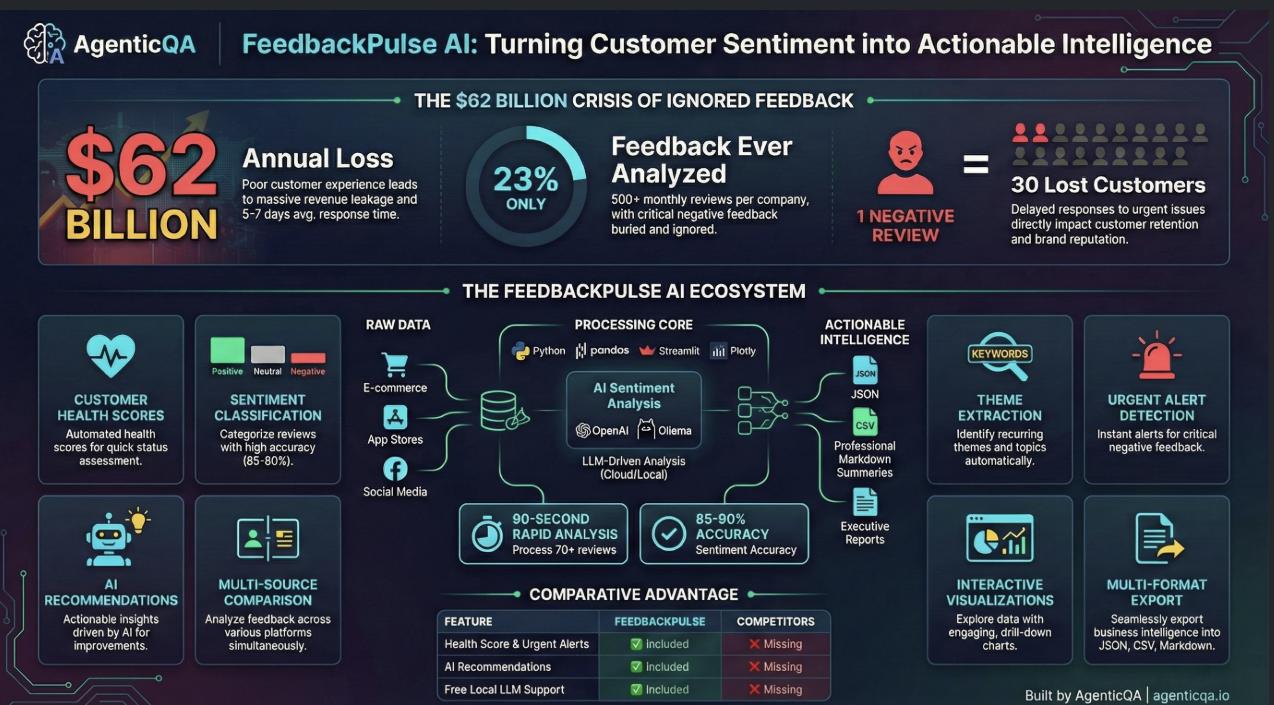

FeedbackPulse AI

Turning Customer Sentiment into Actionable Intelligence

What It Does

- AI Sentiment Analysis with 85-90% accuracy

- Customer Health Scores for quick assessment

- Sentiment Classification (Positive/Neutral/Negative)

- Theme Extraction - identifies recurring topics

- Urgent Alert Detection for critical feedback

- Multi-Source Comparison (E-commerce, App Stores, Social)

- Interactive Visualizations with drill-down charts

- Multi-Format Export (JSON, CSV, Markdown)
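One way a health score could be derived from per-review sentiment labels is sketched below. The formula (50 plus 50 times the net positive share) and the function name are assumptions chosen for illustration; they are not FeedbackPulse's documented method.

```python
from collections import Counter


def health_score(labels):
    """Map sentiment labels to 0-100: 0 is all negative, 50 balanced, 100 all positive."""
    total = len(labels)
    if total == 0:
        return None  # no feedback, no score
    counts = Counter(labels)
    net = (counts["positive"] - counts["negative"]) / total
    return round(50 + 50 * net)


labels = ["positive", "positive", "positive", "neutral", "negative"]
print(health_score(labels))  # 70
```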

Tech Stack

- Python (Core processing engine)

- Pandas (Data manipulation & analysis)

- Streamlit (Interactive web interface)

- Plotly (Interactive visualizations)

- OpenAI / Ollama (LLM-driven analysis)

⚡ Key Metrics

- 90-second rapid analysis (70+ reviews)

- 85-90% sentiment accuracy

- Free Local LLM Support included

MeetingMind AI

Reclaiming the $37 Billion Lost to Unproductive Meetings

What It Does

- Risk & Blocker Detection with severity levels

- Real-Time Meeting Cost Calculator

- Ask AI (Natural language Q&A on meetings)

- AI Action Items - automates takeaways & follow-ups

- Sentiment Analysis for participant mood

- Risk & Cost Tracking dashboard
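The arithmetic behind a meeting cost calculator is simple enough to sketch. The example assumes cost is the sum of attendee hourly rates multiplied by the meeting length in hours; the function name and the sample rates are hypothetical, not taken from MeetingMind AI.

```python
def meeting_cost(hourly_rates, minutes):
    """Cost = sum of attendee hourly rates x meeting length in hours."""
    if minutes < 0:
        raise ValueError("minutes must be non-negative")
    return round(sum(hourly_rates) * minutes / 60, 2)


# Four attendees in a 45-minute meeting.
cost = meeting_cost([60, 45, 80, 55], minutes=45)
print(cost)  # 180.0
```

A real-time display would call the same function repeatedly with the elapsed minutes so far.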

Tech Stack

- Local AI Engine (Privacy-first processing)

- Python (Core backend logic)

- Streamlit (Interactive dashboard UI)

- Ollama (Free local LLM provider)

- NLP Processing (Advanced text extraction)

💰 Enterprise Power, Zero Cost

- MeetingMind AI: FREE

- Competitors (Fireflies/Otter): $5,000+

Interview Prep

Architecture Decision Records

Decision: LLMs generate test intents (JSON), deterministic code executes them.

Rationale: CI/CD tests must be 100% deterministic.

Decision: Separate Intent → Validation → Execution.

Key Rule: NO LLM calls in Layer 3 (Execution). Ever.

Decision: Use RAG (not fine-tuning) for domain knowledge.

Benefits: Instant updates, lower cost, transparent retrieval.